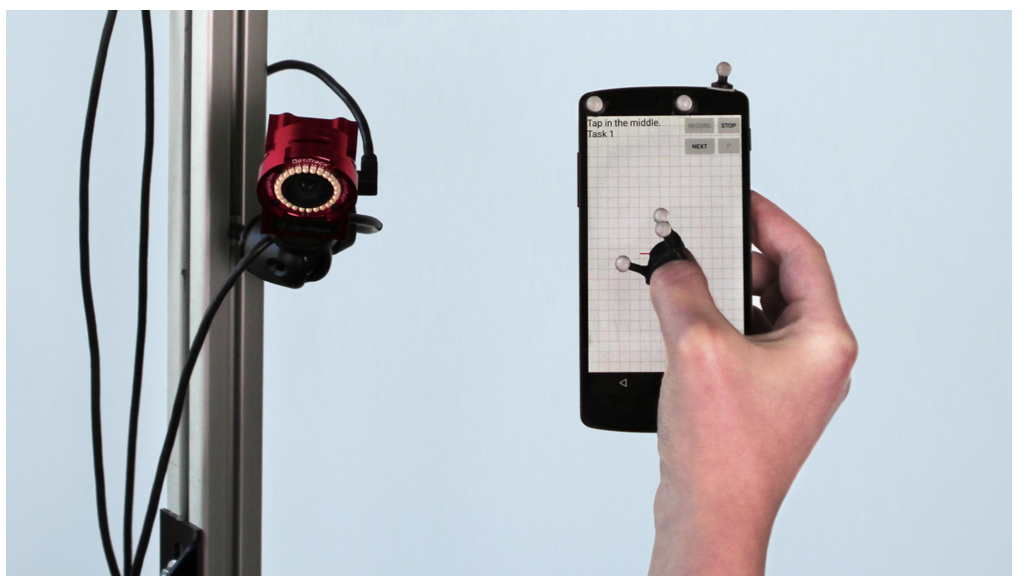

ThumbPitch: Enriching Thumb Interaction on Mobile Touchscreens using Deep Learning

Jamie Ullerich, Maximiliane Windl, Andreas Bulling, Sven Mayer

ACM Proceedings of the 34th Australian Conference on Human-Computer-Interaction (OzCHI), pp. 1–9, 2022.

Abstract

Today touchscreens are one of the most common input devices for everyday ubiquitous interaction. Yet, capacitive touchscreens are limited in expressiveness; thus, a large body of work has focused on extending the input capabilities of touchscreens. One promising approach is to use index finger orientation; however, this requires a two-handed interaction and poses ergonomic constraints. We propose using the thumb’s pitch as an additional input dimension to counteract these limitations, enabling one-handed interaction scenarios. Our deep convolutional neural network detecting the thumb’s pitch is trained on more than 230,000 ground truth images recorded using a motion tracking system. We highlight the potential of ThumbPitch by proposing several use cases that exploit the higher expressiveness, especially for one-handed scenarios. We tested three use cases in a validation study and validated our model. Our model achieved a mean error of only 11.9°.

Links

Paper: ullerich22_ozchi.pdf

BibTeX

@inproceedings{ullerich22_ozchi,
  author = {Ullerich, Jamie and Windl, Maximiliane and Bulling, Andreas and Mayer, Sven},
  title = {ThumbPitch: Enriching Thumb Interaction on Mobile Touchscreens using Deep Learning},
  booktitle = {ACM Proceedings of the 34th Australian Conference on Human-Computer-Interaction (OzCHI)},
  year = {2022},
  pages = {1--9},
  doi = {10.1145/3572921.3572925}
}