GazeProjector: Accurate Gaze Estimation and Seamless Gaze Interaction Across Multiple Displays

Christian Lander, Sven Gehring, Antonio Krüger, Sebastian Boring, Andreas Bulling

Proc. ACM Symposium on User Interface Software and Technology (UIST), pp. 395-404, 2015.

Abstract

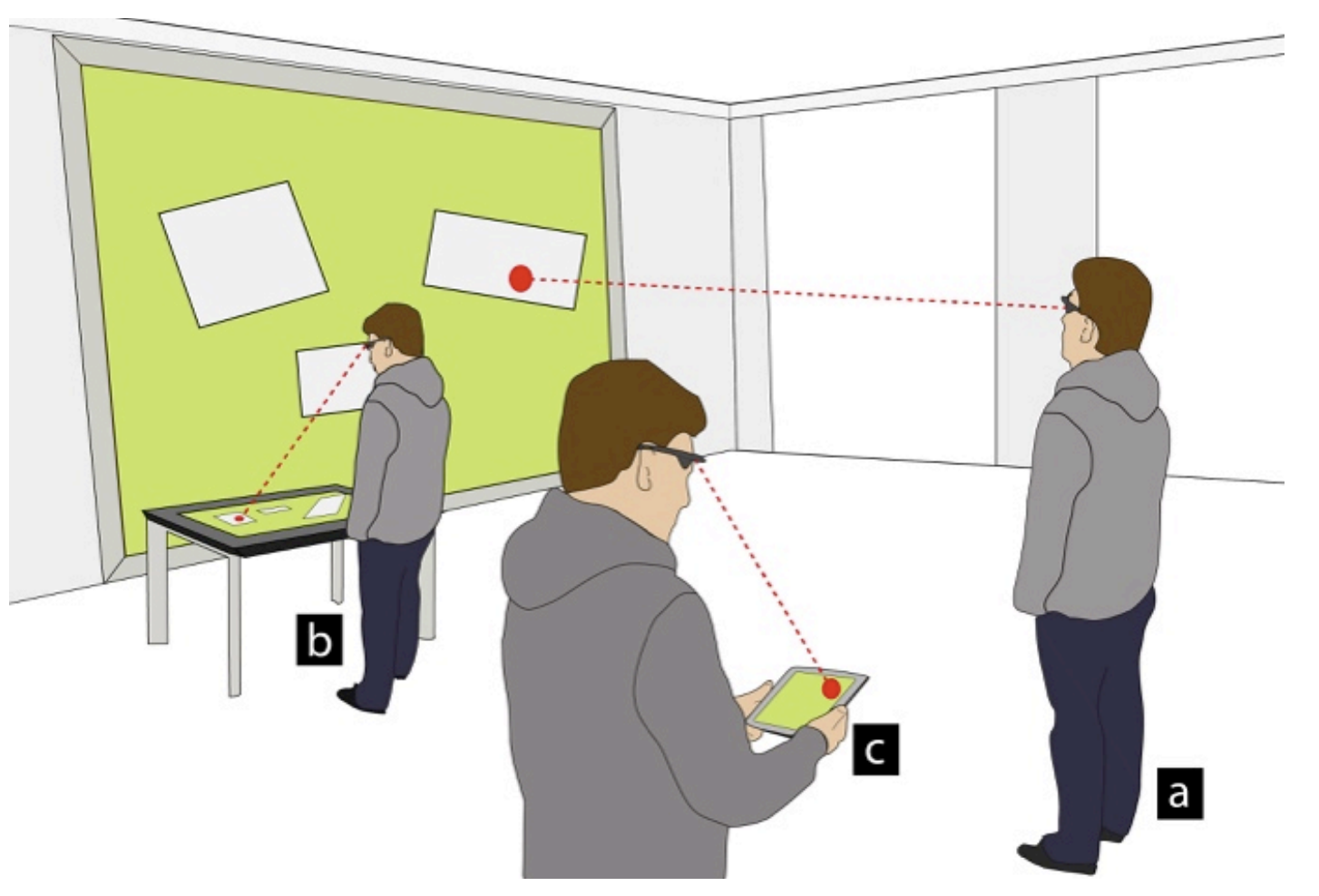

Mobile gaze-based interaction with multiple displays may occur from arbitrary positions and orientations. However, maintaining high gaze estimation accuracy in such situations remains a significant challenge. In this paper, we present GazeProjector, a system that combines (1) natural feature tracking on displays to determine the mobile eye tracker’s position relative to a display with (2) accurate point-of-gaze estimation. GazeProjector allows for seamless gaze estimation and interaction on multiple displays of arbitrary sizes independently of the user’s position and orientation to the display. In a user study with 12 participants we compare GazeProjector to established methods (here: visual on-screen markers and a state-of-the-art video-based motion capture system). We show that our approach is robust to varying head poses, orientations, and distances to the display, while still providing high gaze estimation accuracy across multiple displays without re-calibration for each variation. Our system represents an important step towards the vision of pervasive gaze-based interfaces.

Links

Paper: lander15_uist.pdf

BibTeX

@inproceedings{lander15_uist,
  title = {GazeProjector: Accurate Gaze Estimation and Seamless Gaze Interaction Across Multiple Displays},
  author = {Lander, Christian and Gehring, Sven and Kr{\"{u}}ger, Antonio and Boring, Sebastian and Bulling, Andreas},
  year = {2015},
  pages = {395--404},
  booktitle = {Proc. ACM Symposium on User Interface Software and Technology (UIST)},
  doi = {10.1145/2807442.2807479}
}